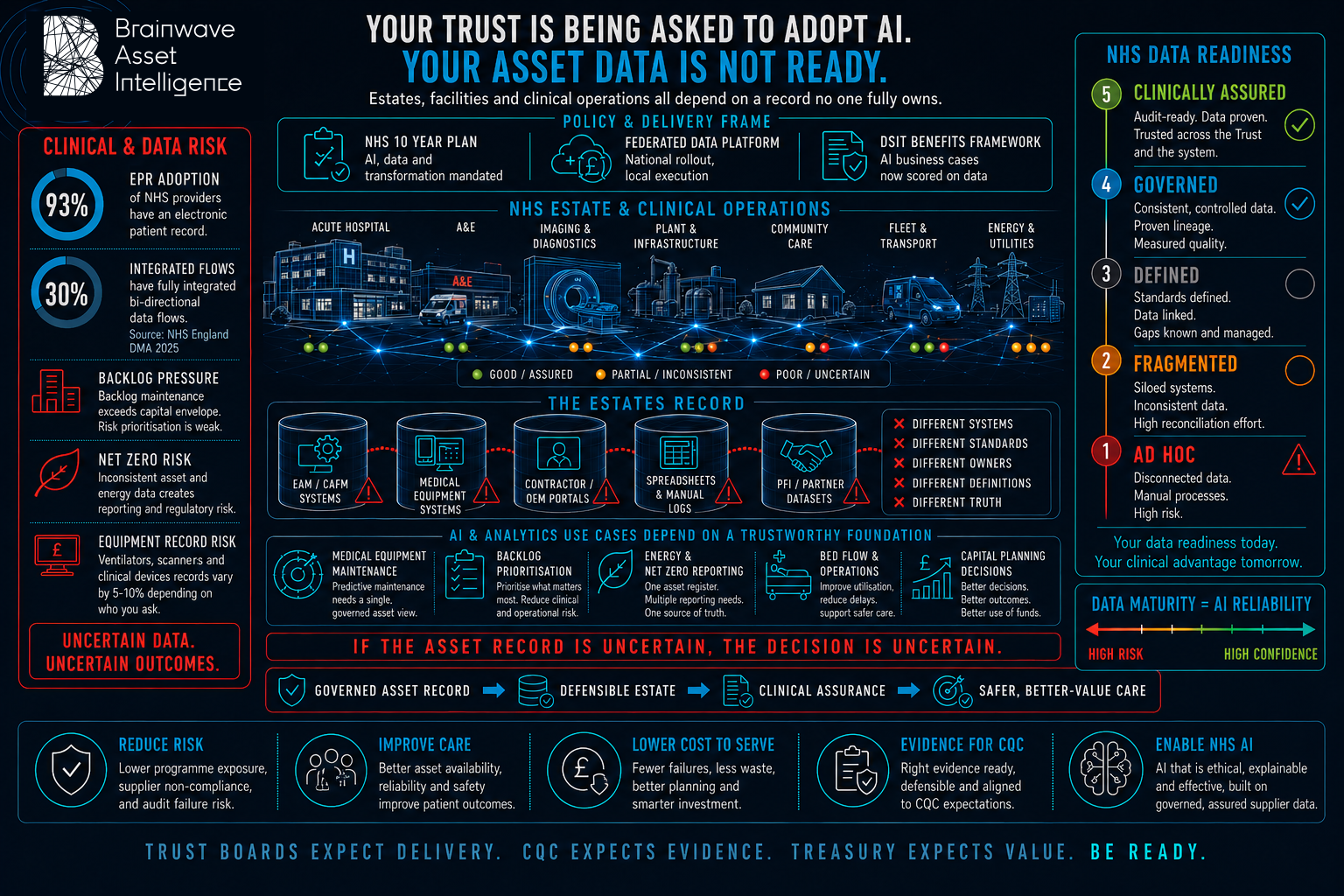

AI in health is not a technology risk.

It is a data governance risk.

NHS Trusts face intense pressure to adopt AI, and equally intense scrutiny when things go wrong. We help health organisations close the gap between AI ambition and the governance, data quality, and readiness that safe adoption demands.

Why health AI initiatives stall before they start

Data Trust Index scores expose the gap

Most Trusts cannot quantify the quality of their asset and operational data. Without a baseline, procurement decisions rest on assumptions.

Regulatory exposure is not theoretical

Ungoverned AI in a clinical or operational context creates direct regulatory liability. We design governance frameworks that satisfy CQC and Board audit scrutiny.

Legacy estates make asset data complex

Multi-site, mixed-vintage estates with disparate CAFM and CMMS platforms require normalisation before any AI deployment can be trusted.

Our services for NHS & Health

Strategic Advisory

AI readiness assessments, data maturity benchmarks, and sector-specific roadmaps aligned to your operational reality.

Learn more →

Data Transformation

Asset data cleansing, taxonomy alignment, EAM and IWMS modernisation, and structured data foundations that AI can actually use.

Learn more →

Managed Services

Data Governance as a Service (DGaaS), continuous AI oversight, model monitoring, and responsible governance on a retainer.

Learn more →

Built on the Asset Intelligence Framework

Three pillars. One integrated approach. Governance, Data Maturity, and AI Readiness, assessed together and delivered with SAFE-AI™ governance active whenever AI is in scope.

What organisations in NHS & Health ask us

What data governance does an NHS Trust need before deploying AI on its estate?

Before any AI deployment, a Trust needs a validated asset register with consistent taxonomy, a data quality baseline against a recognised framework, and governance structures that satisfy both CQC inspection standards and Board-level accountability requirements. Deploying AI before these foundations are in place creates regulatory exposure rather than clinical benefit.

How does CQC assess AI governance in NHS Trusts?

CQC scrutinises AI governance under the Well-Led inspection domain. Inspectors expect Trusts to demonstrate that AI systems are explainable, that data inputs are validated, and that human oversight is documented and enforced. Trusts unable to produce auditable AI governance evidence face direct regulatory risk regardless of the AI tool's clinical performance.

Why do NHS AI pilots succeed in trials but fail to scale?

Pilots typically run on curated, cleaned datasets managed by the pilot team. Scaled deployment across a full estate — with multi-system, mixed-vintage data from disparate CAFM and CMMS platforms — exposes data quality problems that pilot conditions never encountered. Scaling AI requires scaling the underlying data governance, not just the technology.

What does a Brainwave Asset Intelligence NHS engagement look like in practice?

We begin with a structured AI readiness assessment covering asset register quality, data governance maturity, and CAFM and CMMS architecture. This produces a scored baseline and a prioritised roadmap. Engagements then move into data transformation — cleansing records, aligning taxonomies, establishing governance structures — before any AI tool is procured or deployed.

Ready to make AI safe and clinically credible in your Trust?

Be ready.